However, and this is a pretty big caveat that quite a few books get wrong, those formulas only apply when the parameters are estimated from the grouped data. If you estimate parameters from ungrouped data (e.g. …)

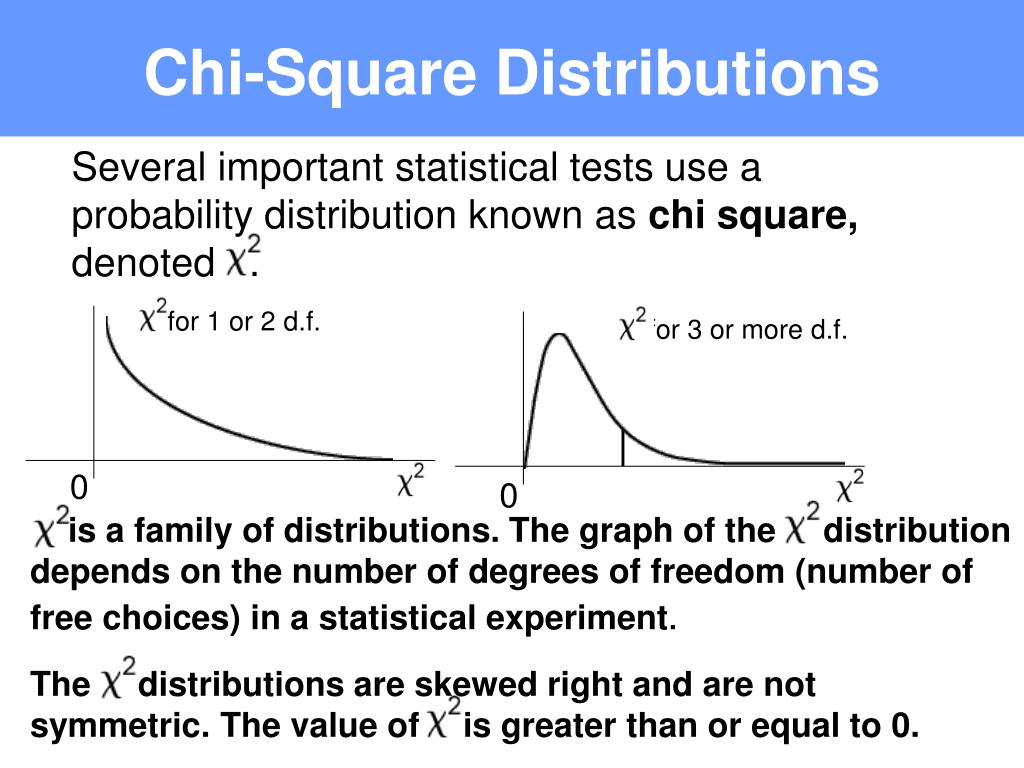

Miller and Freund actually specify that their $m$ is "the number of quantities obtained from the observed data that are needed to calculate the expected frequencies" (8th ed, p296). The difference from what you said they say is critical, since the total count is something you calculate from the data. If you look at their examples, the total count is included in $m$ quite explicitly (there's an example on the very same page where they define their $m$).

The ones that specify $k-m-1$ define $m$ in a way that doesn't include the total count. Which is to say, when you look properly, everyone agrees: the two definitions of $m$ differ by 1 in just the right way that both formulas give the same result. So all you need to do now is figure out how many parameters you estimate in each case and then include the 1 in the appropriate place for whichever formula you use. That number of parameters is NOT always the same even if you test for the same distribution: testing a Poisson(10) is not the same as testing a Poisson with unspecified $\lambda$. Just count how many parameters you estimate, then add 1 when you use the total count. So for example, if you estimate both parameters of the normal, you'd normally subtract 3 d.f. If both parameters are specified, you'd only subtract 1. If you estimate one parameter, you'd subtract 2, and so on.
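To make the bookkeeping concrete, here is a minimal Python sketch of the two conventions. The function names and the choice of `k = 8` cells are hypothetical, picked only for illustration. The $k-m$ form is the one implied by Miller and Freund's definition of $m$ quoted here. Like the formulas themselves, this assumes any estimated parameters were estimated from the grouped data.

```python
def df_params_only(n_cells, n_estimated_params):
    """Degrees of freedom as k - m - 1, where m counts only the
    parameters estimated from the data (total count NOT included)."""
    if n_estimated_params < 0:
        raise ValueError("number of estimated parameters cannot be negative")
    return n_cells - n_estimated_params - 1


def df_all_quantities(n_cells, n_quantities_from_data):
    """Degrees of freedom as k - m, where m counts every quantity taken
    from the data to compute expected frequencies (total count included)."""
    if n_quantities_from_data < 1:
        raise ValueError("the total count is always taken from the data")
    return n_cells - n_quantities_from_data


k = 8  # hypothetical number of cells in the grouped data

# Each case: (description, number of parameters estimated from the data)
cases = [
    ("normal, both parameters estimated", 2),
    ("normal, both parameters specified", 0),
    ("Poisson(10), lambda specified", 0),
    ("Poisson, lambda estimated", 1),
]

for label, n_params in cases:
    a = df_params_only(k, n_params)
    # Under the second convention, m is the parameter count plus 1
    # for the total count.
    b = df_all_quantities(k, n_params + 1)
    print(f"{label}: k-m-1 gives {a}, k-m gives {b}")
```

Both conventions return the same value in every case, because the two definitions of $m$ differ by exactly the 1 that accounts for the total count.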
